
Volatile Keyword in Java: An Introduction

I’m Arsalan Mirbozorgi,

In the absence of the necessary synchronization, the compiler, the runtime, or the processors may apply all sorts of optimizations. Even though these optimizations are usually beneficial, they can occasionally cause subtle problems. Caching and reordering are optimizations that may surprise us in concurrent contexts. Java and the JVM provide many ways to control memory order, and the volatile keyword is one of them.

In this article, we'll focus on volatile, a foundational but often misunderstood concept in the Java language. We'll start with some background on how the underlying computer architecture works, and then get familiar with memory order in Java.

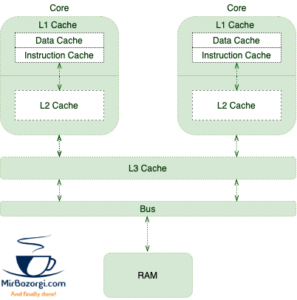

Shared Multiprocessor Architecture

In a shared multiprocessor architecture, processors are responsible for executing program instructions. To do so, they need to retrieve both the program instructions and the data they operate on from memory.

Because CPUs can execute a very large number of instructions per second, fetching everything directly from RAM would be far too slow for them. To improve performance, processors use techniques such as branch prediction, speculative execution, and caching.

This gives rise to a memory hierarchy: each core typically has its own small, fast caches, which sit in front of larger, slower shared caches and, ultimately, main memory.

As the cores execute more instructions and work with more data, each core's cache fills up with the data and instructions relevant to it. This improves overall performance, but the trade-off is a new class of cache coherence challenges.

So we should think twice about what happens when one thread updates a cached value.

When to Use volatile

To understand cache coherence better, let's borrow an example from the book Java Concurrency in Practice:

```java
public class TaskRunner {

    private static int number;
    private static boolean ready;

    private static class Reader extends Thread {

        @Override
        public void run() {
            while (!ready) {
                Thread.yield();
            }
            System.out.println(number);
        }
    }

    public static void main(String[] args) {
        new Reader().start();
        number = 42;
        ready = true;
    }
}
```

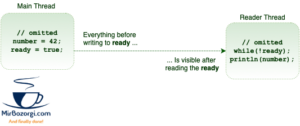

The TaskRunner class maintains two simple variables. In its main method, it starts another thread that spins on the ready variable for as long as it is false. Once that thread sees ready become true, it prints the number variable.

Many of us would expect this program to print 42 after a short delay. In reality, the delay may be much longer. The program might even hang forever and never print anything.

These anomalies are caused by a lack of proper memory visibility and by reordering. Let's examine each of them more closely.

Memory Visibility

This simple example has two application threads: the main thread and the reader thread. Suppose the OS schedules them on two different CPU cores:

- The main thread has its own copy of the ready and number variables in its core's cache.

- The reader thread ends up with its own copies as well.

- The main thread updates its cached values.

Most modern processors don't apply write requests immediately. Instead, they queue writes in a write buffer and, after a while, apply them to main memory all at once.

Given all that, there is no guarantee that the reader thread sees the same values of number and ready as the main thread. It might see the updated values immediately, only after some delay, or never at all.

These visibility problems can cause liveness failures, such as a loop that never terminates, in programs that rely on visibility.
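To make the write-buffer explanation more concrete, here is a deliberately simplified toy model. It is a single-threaded sketch, not a description of real hardware or of the JVM: the classes ToyMemory, ToyCore, and ToyVisibilityDemo are hypothetical, each core only has a private write buffer in front of a shared "main memory", and the integer 1 stands in for true.

```java
import java.util.ArrayDeque;
import java.util.HashMap;
import java.util.Map;

// Toy shared memory (hypothetical): unset variables read as 0, like Java int fields.
class ToyMemory {
    final Map<String, Integer> main = new HashMap<>();

    int read(String var) {
        return main.getOrDefault(var, 0);
    }
}

// Toy core (hypothetical): writes are queued in a private buffer until flush().
class ToyCore {
    private final ToyMemory memory;
    private final ArrayDeque<Map.Entry<String, Integer>> writeBuffer = new ArrayDeque<>();

    ToyCore(ToyMemory memory) {
        this.memory = memory;
    }

    void write(String var, int value) {
        writeBuffer.addLast(Map.entry(var, value)); // queued, not yet in main memory
    }

    int read(String var) {
        // A core sees its own latest buffered write; otherwise it reads main memory.
        int result = memory.read(var);
        for (Map.Entry<String, Integer> e : writeBuffer) {
            if (e.getKey().equals(var)) {
                result = e.getValue();
            }
        }
        return result;
    }

    void flush() {
        // Drain all buffered writes to main memory at once.
        while (!writeBuffer.isEmpty()) {
            Map.Entry<String, Integer> e = writeBuffer.pollFirst();
            memory.main.put(e.getKey(), e.getValue());
        }
    }
}

class ToyVisibilityDemo {
    // Returns {ready seen by the main core, ready seen by the reader before
    // the flush, ready seen by the reader after the flush}.
    static int[] run() {
        ToyMemory memory = new ToyMemory();
        ToyCore mainCore = new ToyCore(memory);
        ToyCore readerCore = new ToyCore(memory);

        mainCore.write("number", 42);
        mainCore.write("ready", 1);                  // 1 stands in for true
        int mainSees = mainCore.read("ready");       // its own buffered write
        int readerBefore = readerCore.read("ready"); // still buffered on mainCore
        mainCore.flush();                            // writes reach main memory
        int readerAfter = readerCore.read("ready");  // now visible to the reader
        return new int[] {mainSees, readerBefore, readerAfter};
    }

    public static void main(String[] args) {
        int[] r = run();
        System.out.println("main core: " + r[0] + ", reader before flush: " + r[1]
                + ", reader after flush: " + r[2]);
    }
}
```

In this model, the main core already sees ready as 1 while the reader core still sees 0, until the buffer is flushed. Real processors and the JIT compiler add more effects than this, which is why the Java Memory Model, rather than any particular hardware model, is what Java code should rely on.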

Reordering

To make matters worse, the reader thread may see these writes in an order different from the actual program order. For instance, the main method updates number before ready:

```java
public static void main(String[] args) {
    new Reader().start();
    number = 42;
    ready = true;
}
```

So we might expect the reader thread to print 42. However, it can actually print 0, the default value of an int field!

Reordering is an optimization technique for improving performance. Interestingly, several different components may apply it:

- The processor may flush its write buffer in an order other than the program order.

- The processor may apply out-of-order execution.

- The JIT compiler may optimize the code by reordering it.
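To see how reordering alone can make the reader print 0, here is a toy enumeration. It is a single-threaded sketch with hypothetical names (ReorderingOutcomes, Write, possiblePrints), not a model of the Java Memory Model: it simply lists the values the reader could print if the two writes in main may become visible in either order and the reader may check memory at any point. The case where the reader never sees ready and loops forever is not counted.

```java
import java.util.List;
import java.util.Set;
import java.util.TreeSet;

// Toy enumeration (hypothetical names): which values can the reader print if the
// writes to number and ready may become visible in either order?
class ReorderingOutcomes {

    // A single write of `value` to the field named `field`.
    static final class Write {
        final String field;
        final int value;

        Write(String field, int value) {
            this.field = field;
            this.value = value;
        }
    }

    static Set<Integer> possiblePrints(boolean allowReordering) {
        Write number = new Write("number", 42);
        Write ready = new Write("ready", 1); // 1 stands in for true

        // Orders in which the two writes may become visible to the reader.
        List<List<Write>> visibilityOrders = allowReordering
                ? List.of(List.of(number, ready), List.of(ready, number))
                : List.of(List.of(number, ready));

        Set<Integer> printed = new TreeSet<>();
        for (List<Write> order : visibilityOrders) {
            // The reader may check memory after 0, 1, or 2 writes are visible.
            for (int visible = 0; visible <= order.size(); visible++) {
                int seenNumber = 0; // default value of an int field
                int seenReady = 0;  // default value, i.e. false
                for (Write w : order.subList(0, visible)) {
                    if (w.field.equals("number")) {
                        seenNumber = w.value;
                    } else {
                        seenReady = w.value;
                    }
                }
                if (seenReady == 1) {
                    printed.add(seenNumber); // the loop exits and prints number
                }
            }
        }
        return printed;
    }

    public static void main(String[] args) {
        System.out.println("program order only: " + possiblePrints(false)); // [42]
        System.out.println("with reordering:    " + possiblePrints(true));  // [0, 42]
    }
}
```

If the write to ready becomes visible first, there is a moment when the reader sees ready as true but number still at its default, which is exactly the surprising 0.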

Volatile Memory Order

To make sure that updates to variables propagate predictably to other threads, we should apply the volatile modifier to them:

```java
public class TaskRunner {

    private static volatile int number;
    private static volatile boolean ready;

    // same as before
}
```

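By declaring these fields volatile, we tell the compiler, the runtime, and the processor that accesses to them must not be reordered in ways the Java Memory Model forbids. We also require that a write to a volatile field becomes visible to every thread that subsequently reads it, instead of lingering in a core-local cache or write buffer.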
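The same guarantee underlies one of the most common uses of volatile: a stop flag. The sketch below is our own illustration rather than part of the original example, and the StoppableWorker class and its demo method are hypothetical. If running were not volatile, the JIT compiler would be free to read it once and hoist it out of the loop, so the worker might never stop.

```java
// Hypothetical example of a common volatile use: a stop flag. One thread
// spins while `running` is true; another thread clears it to end the loop.
class StoppableWorker extends Thread {
    private volatile boolean running = true;

    @Override
    public void run() {
        while (running) {        // volatile read on every iteration
            Thread.onSpinWait(); // busy-wait hint (Java 9+)
        }
    }

    void shutdown() {
        running = false; // volatile write: the worker's later reads will see it
    }

    // Starts a worker, asks it to stop, and reports whether it actually stopped.
    static boolean demo() {
        StoppableWorker worker = new StoppableWorker();
        worker.setDaemon(true); // never keep the JVM alive, even if the demo fails
        worker.start();
        try {
            Thread.sleep(50);   // let the worker spin for a moment
            worker.shutdown();
            worker.join(5_000); // wait up to five seconds for it to exit
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        }
        return !worker.isAlive();
    }

    public static void main(String[] args) {
        System.out.println("worker stopped: " + demo());
    }
}
```

Only one thread writes the flag and the other only reads it, so visibility is all we need here; no mutual exclusion is involved.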

Volatile and Thread Synchronization

For multithreaded applications to behave consistently, we need to ensure two properties:

- Mutual exclusion – only one thread at a time executes a critical section.

- Visibility – changes one thread makes to shared data are visible to other threads, which keeps the data consistent.

Synchronized methods and blocks provide both of these properties, at some cost to performance.

The volatile keyword is useful because it guarantees the visibility of data changes without providing mutual exclusion. That makes it a good fit when multiple threads may execute a block of code in parallel, but we still need the visibility guarantee.
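The difference between visibility and mutual exclusion shows up as soon as several threads perform a read-modify-write such as count++. The following sketch uses a hypothetical Counters class to contrast a volatile counter with one updated inside a synchronized method, the tool named above for mutual exclusion. With volatile alone, two threads can read the same value and both write back value + 1, so an update is lost.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical demo: volatile gives visibility but not mutual exclusion.
// count++ is read, add one, write back: three steps that can interleave.
class Counters {
    static volatile int volatileCount;
    static int syncCount;

    // synchronized makes the whole read-modify-write a critical section.
    static synchronized void incrementSync() {
        syncCount++;
    }

    // Returns {volatileCount, syncCount} after all threads finish.
    static int[] run(int threads, int incrementsPerThread) {
        volatileCount = 0;
        syncCount = 0; // safe: these writes happen before the workers start
        List<Thread> workers = new ArrayList<>();
        for (int t = 0; t < threads; t++) {
            Thread worker = new Thread(() -> {
                for (int i = 0; i < incrementsPerThread; i++) {
                    volatileCount++; // visible, but not atomic: updates can be lost
                    incrementSync(); // visible and mutually exclusive
                }
            });
            workers.add(worker);
            worker.start();
        }
        try {
            for (Thread worker : workers) {
                worker.join(); // join() also makes the workers' writes visible here
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        }
        return new int[] {volatileCount, syncCount};
    }

    public static void main(String[] args) {
        int[] r = run(4, 100_000);
        // The synchronized total is always 400000; the volatile total may be lower.
        System.out.println("volatile: " + r[0] + ", synchronized: " + r[1]);
    }
}
```

How many updates the volatile counter loses depends on timing, so it can differ from run to run; the synchronized counter always ends at exactly threads × incrementsPerThread.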

Happens-Before Ordering

The memory visibility effects of volatile variables extend beyond the volatile variables themselves.

Suppose thread A writes to a volatile variable, and later thread B reads that same volatile variable. In that case, every value that was visible to A before it wrote the volatile variable will be visible to B after it reads that variable.

Technically speaking, a write to a volatile field happens-before every subsequent read of that same field. This is the volatile variable rule of the Java Memory Model (JMM).
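Applied to TaskRunner with ready declared volatile, the rule combines with two other JMM facts, program order within each thread and the transitivity of happens-before, into the chain below. The notation is our own shorthand: w(x) is a write to x, r(x) is a read of x, and an arrow labeled hb means "happens-before". The third step assumes the reader's read of ready actually returns true, which is what ends its loop.

```latex
\begin{aligned}
&w(\texttt{number}) \xrightarrow{\text{hb}} w(\texttt{ready})
  && \text{program order in the main thread}\\
&w(\texttt{ready}) \xrightarrow{\text{hb}} r(\texttt{ready})
  && \text{volatile variable rule}\\
&r(\texttt{ready}) \xrightarrow{\text{hb}} r(\texttt{number})
  && \text{program order in the reader thread}\\
&\Rightarrow\; w(\texttt{number}) \xrightarrow{\text{hb}} r(\texttt{number})
  && \text{transitivity}
\end{aligned}
```

Since the write of 42 happens-before the reader's read of number, that read must see 42, even though number itself is not volatile.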

Piggybacking

Thanks to this happens-before ordering, other variables can piggyback on the visibility guarantees of a volatile variable. In our example, we only need to mark the ready variable as volatile:

```java
public class TaskRunner {

    private static int number; // not volatile
    private static volatile boolean ready;

    // same as before
}
```

Everything the main thread did before writing true to ready is visible to the reader thread once it reads ready and sees true. Therefore, the number variable piggybacks on the memory visibility enforced by the ready variable. Put simply, even though number is not volatile, it behaves as if it were.

By relying on these semantics, we can declare only a few of a class's variables volatile and still get the visibility guarantees we need.
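Here is a complete, runnable variant of the piggybacking example, written as a sketch. The class name PiggybackTaskRunner, the seen field, and the runOnce helper are hypothetical additions so the observed value can be returned and checked instead of only printed; the shared state is reset at the start of each call, before the reader thread starts.

```java
// Runnable variant of the piggybacking example. The class name, the `seen`
// field, and runOnce() are hypothetical additions for returning the result.
class PiggybackTaskRunner {
    private static int number;             // not volatile
    private static volatile boolean ready; // the only volatile field

    private static class Reader extends Thread {
        int seen = -1; // read by the main thread only after join()

        @Override
        public void run() {
            while (!ready) {   // volatile read
                Thread.yield();
            }
            seen = number;     // plain read, ordered after the volatile read
        }
    }

    // Runs the scenario once and returns the value the reader observed.
    static int runOnce() {
        number = 0;     // reset before the reader starts; Thread.start()
        ready = false;  // makes these writes visible to the new thread
        Reader reader = new Reader();
        reader.start();
        number = 42;    // plain write ...
        ready = true;   // ... published by this volatile write
        try {
            reader.join(); // join() makes the reader's write to `seen` visible here
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for the reader", e);
        }
        return reader.seen;
    }

    public static void main(String[] args) {
        System.out.println(runOnce()); // prints 42
    }
}
```

Note that the ordering matters: only writes made before the volatile write to ready are covered, so moving number = 42 after ready = true would lose the guarantee.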

Conclusion – Volatile Keyword

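In this article, we've explored the volatile keyword: why caching and reordering make it necessary, which visibility and ordering guarantees it provides, and how happens-before ordering lets other variables piggyback on it. These guarantees have been part of Java since Java 5, when the revised Java Memory Model (JSR-133) strengthened the semantics of volatile.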